4.1 Handover from acquisition/pre-ingest to ingest

4.1.1 The transition

If this was not done in pre-ingest, the data package must be checked to confirm that all necessary files have been transferred, that the files are in the correct formats, and whether any data are sensitive or personal. Initial checks can also include verifying that documents in PDF format open, since they should not be read-only or encrypted. It is also essential to ensure that the dataset is eligible to be accepted by the repository (correct discipline, accepted kind of data [quantitative/qualitative], depositor belonging to the selected group of depositors, possibly quality of data, and other criteria that need to be fulfilled according to the Data Collection Policy).
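The completeness and format checks described above can be partly automated. The following is a minimal sketch, not a prescribed procedure: the file names in `REQUIRED_FILES` and the extensions in `ACCEPTED_SUFFIXES` are hypothetical placeholders for whatever a repository's own policy requires, and the PDF test is only a heuristic (it looks for an `/Encrypt` entry in the file, which encrypted or permission-restricted PDFs normally carry). A flagged PDF should still be opened manually.

```python
from pathlib import Path

# Hypothetical policy values; replace them with your repository's own requirements.
REQUIRED_FILES = {"contract.pdf", "codebook.pdf", "data.csv"}
ACCEPTED_SUFFIXES = {".pdf", ".csv", ".sav", ".dta", ".txt"}


def check_package(folder: Path) -> dict:
    """Return a report of problems found in a received data package."""
    files = [p for p in folder.rglob("*") if p.is_file()]
    names = {p.name for p in files}

    # Required files that were not transferred at all.
    missing = sorted(REQUIRED_FILES - names)

    # Files whose extension is not on the accepted list.
    unaccepted = sorted(
        p.name for p in files if p.suffix.lower() not in ACCEPTED_SUFFIXES
    )

    # Heuristic only: PDFs containing an /Encrypt entry are probably
    # encrypted or restricted and should be inspected by hand.
    possibly_encrypted = sorted(
        p.name
        for p in files
        if p.suffix.lower() == ".pdf" and b"/Encrypt" in p.read_bytes()
    )

    return {
        "missing": missing,
        "unaccepted_format": unaccepted,
        "possibly_encrypted_pdf": possibly_encrypted,
    }
```

Calling `check_package(Path("path/to/package"))` returns three lists; an empty report means none of these automated checks found a problem, not that the package is free of sensitive or personal data, which still requires human review.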

Once pre-ingest is finished, the ingest team is notified that the data can be handed over. Archives of different sizes might handle this handover differently. Whether these steps belong to pre-ingest or to ingest is also open to interpretation. In smaller archives, the functions of ingest, archival storage, access and preservation may not be clearly distinguishable, as they are linked and one or two experts may carry out all of the steps.

Repositories organise the data transfer from the pre-ingest/acquisition agent to ingest staff, and the notification about that transfer, in different ways.

How we do it: Transition from pre-ingest to ingest

Notification that data is ready for ingest

- Option A: Automated notification through software system

- Option B: Email from pre-ingest agent to ingest agent

- Option C: Other form of notification

Transfer of data

- Option D: Downloading from data transfer system (archive material has been transferred directly from depositor to this system)

- Option E: Email the data (Forward)

- Option F: Download from server (where pre-ingest agent has stored it)

Transfer of data via email is declining because it is not secure: malware can access emails and all their attachments, as can other people if your device is not properly locked. Not all email programs encrypt messages, and any network connection along the way (e.g., between your email provider and your recipient's) may also be insecure. Email transfer is still used because it is cheap, convenient, and fast. It can be made more secure by using end-to-end encryption, so that only the sender and the intended recipient can read the contents of the messages.

After receiving the data and documentation material (in a secure way), it is best practice to make a backup copy of the Submission Information Package (SIP) folder before you start to work on the data. This way, an unchanged copy of the original data and documentation material remains available even if the working files are altered accidentally.
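A backup of the SIP folder can be made and verified in one step. The sketch below assumes the SIP is an ordinary folder on disk; the function names (`sha256_of`, `manifest`, `backup_sip`) are illustrative and not part of any repository software. It copies the folder and then compares SHA-256 checksums of every file, so that a faulty copy is noticed immediately.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Compute the SHA-256 checksum of one file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def manifest(folder: Path) -> dict[str, str]:
    """Map each file's path (relative to the folder) to its checksum."""
    return {
        p.relative_to(folder).as_posix(): sha256_of(p)
        for p in sorted(folder.rglob("*"))
        if p.is_file()
    }


def backup_sip(sip: Path, backup: Path) -> dict[str, str]:
    """Copy the SIP folder and confirm the copy is identical to the original."""
    # copytree raises an error if the backup folder already exists,
    # which protects an earlier backup from being overwritten.
    shutil.copytree(sip, backup)
    original = manifest(sip)
    if manifest(backup) != original:
        raise RuntimeError("Backup does not match the original SIP")
    return original
```

The returned manifest can be stored alongside the ingest documentation; rerunning `manifest()` on the backup later shows whether it is still unchanged.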

The documentation of the ingest process starts here at the latest. Repositories usually keep a register of all data stored in the archive. Important information could include, for example, the archival number, the title of the study, the contact information, the description of the data, and/or the publication data.

To keep track of the current status of a data set, it is useful to use a tracking system, so that repository staff know whether a data set is in the acquisition/pre-ingest stage, in the ingest stage, already published, or waiting in a backlog. Repositories use different tools to keep track of this information.

How we do it: examples

- Project Management Software (e.g., Teamwork)

- Web Application

- Excel Lists (see Annex)
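Whatever tool is used, the underlying record is similar: one register entry per data set, with its current stage and a history of changes. The following sketch illustrates that structure only; the class names, field names, and stage labels are assumptions chosen to mirror the text above, not the data model of any of the tools listed.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Stage(Enum):
    PRE_INGEST = "acquisition/pre-ingest"
    INGEST = "ingest"
    PUBLISHED = "published"
    BACKLOG = "backlog"


@dataclass
class RegisterEntry:
    archival_number: str
    title: str
    contact: str
    stage: Stage = Stage.PRE_INGEST
    history: list = field(default_factory=list)

    def move_to(self, stage: Stage, on: date) -> None:
        """Record the stage change with its date, then update the stage."""
        self.history.append((on.isoformat(), self.stage.value, stage.value))
        self.stage = stage


def by_stage(entries: list, stage: Stage) -> list:
    """List the archival numbers of all data sets currently in a stage."""
    return [entry.archival_number for entry in entries if entry.stage is stage]
```

For example, after `entry.move_to(Stage.INGEST, date.today())`, a call to `by_stage(entries, Stage.INGEST)` includes that entry's archival number, and `entry.history` shows when the handover happened.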

4.1.2 List of data and documents necessary for data deposit

The following list of examples gives an overview of what kind of files a repository might receive for archiving. The decision about which files are considered as mandatory or as optional varies from repository to repository.

Mandatory

- Signed contract (clarified property rights and signatory powers)

- Metadata information (if not already entered directly in platform)

- Data files

- Codebook

- Methods report, covering:

  - Objectives and design: background of study, research problems that study addresses, source of funding

  - Target population and sampling: criteria based on age, gender, residence; describe sampling frame and completeness, sampling unit and method of choosing sampling units

  - Mode of data collection: explain survey modes

  - Instrument: if survey, then content of questionnaire, discussion of scales, question techniques outlined (e.g., implicit attitude measurements)

  - Fieldwork: depends on data collection method; institution responsible for data collection (also subcontractors if these exist), dates of fieldwork period, total number of respondents, descriptive statistics on duration of survey

  - Response rates: compare demographic characteristics of the sample with those of the population; measures to increase response rates should be reported (e.g., incentives, information material, …)

  - Data processing: data cleaning and editing, quality assurance measures, report whether cases were deleted and why, report weights (and their construction), description of recoded or constructed variables

  - Data protection and ethical issues: informed consent and how it was obtained, data anonymisation

- Instruments of data collection (e.g., questionnaire with interviewer instructions, information material for respondents, …)

- Informed consent form (if applicable)

Optional

- Project report

- Data Management Plan (DMP) of project proposal

- Project proposal

- Interviewer guidelines

- Interview cards etc

- Documentation about incentives, contacts, …

- Recoding protocol

- Syntaxes

- Coding instructions

- Showcards

Sometimes there are issues that need to be discussed with the depositors before they submit the data to the repository. One such issue is the licence agreement, because data checks in ingest might differ between open access data and scientific use data. It might also be important for the ingest agents to know whether there is time pressure to publish the data quickly, or whether the depositors simply need a DOI (digital object identifier) reserved. The ingest agent additionally needs to know whether the data should be stored in a certain “collection” or sub-category in the archive solution (e.g., in an ‘own’ dataverse or maybe a ‘public’ dataverse).

4.1.3 Data types and formats used in community

Your repository staff knows best which data formats are used in the designated community. The most common data analysis programs for quantitative data are Stata, SPSS, SAS, R, and Excel. You should think about which data formats you want to offer to the community and find out whether your staff is able to generate these formats. Staff should then compile a list of the most commonly used formats, which can be shared with the community. Additional formats may be generated to be stored in the archive as long-term formats.

Example: Suppose the most commonly used formats for quantitative data are Stata and SPSS. These formats are then generated for dissemination. Additionally, a long-term format could be a Comma Separated Values (CSV) file. A .csv file is a plain text file that contains a list of data and is very easy to handle. In this case, the CSV file remains only in the archive, while the Stata and SPSS files are distributed among the research community.
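To illustrate why CSV is easy to handle, the sketch below writes a small table to CSV text and reads it back using only Python's standard `csv` module. The variable names and values are invented for illustration. Note that everything read back from a CSV file is text: the format itself does not record whether a column held numbers or strings.

```python
import csv
import io

# Invented example records; real data would come from the deposited file.
rows = [
    {"respondent_id": 1, "age": 34, "region": "North"},
    {"respondent_id": 2, "age": 51, "region": "South"},
]


def to_csv_text(records: list) -> str:
    """Serialise a non-empty list of dictionaries as CSV text with a header row."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=list(records[0].keys()))
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def from_csv_text(text: str) -> list:
    """Parse CSV text back into a list of dictionaries (all values are strings)."""
    return list(csv.DictReader(io.StringIO(text, newline="")))
```

The first line of `to_csv_text(rows)` is the header `respondent_id,age,region`, and each following line is one record, readable in any text editor.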

For qualitative data this decision would be different, depending on the programs used for analysis. A simple PDF/A file may be the easiest to archive because it is easy to access. Interview transcripts are mostly produced in word processors, so archives need to be able to handle Microsoft Word (.doc and .docx), Open Document Text (.odt) and/or plain text files (.txt).

4.1.4 Preferred formats

Your repository might have preferences regarding the data formats in which depositors should hand over the data. A smooth ingest process is ensured only if your staff can easily handle the transferred data. The UK Data Service (n.d.) provides both recommended and acceptable file formats.

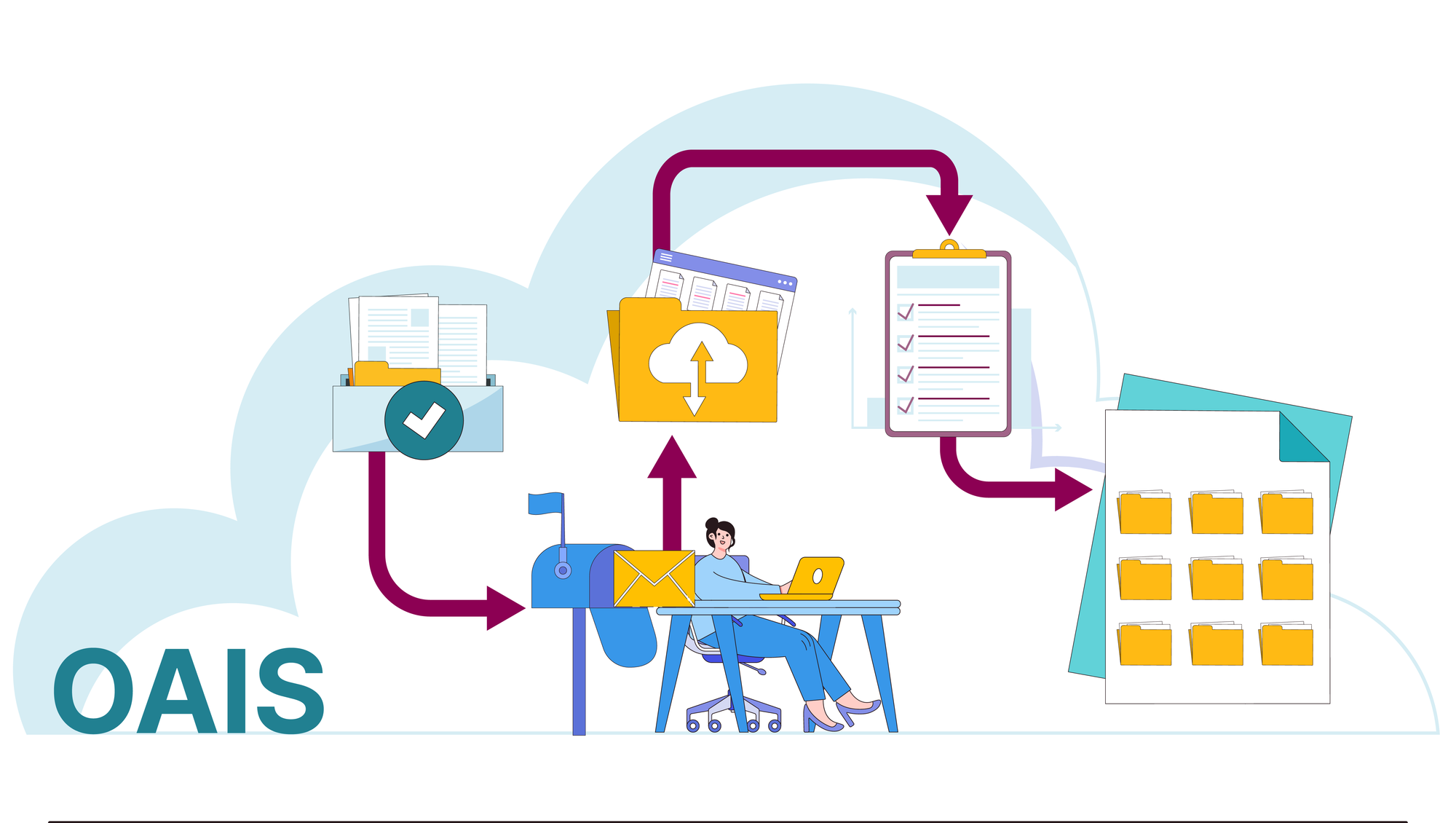

OAIS Model: Reference to ingest

The process of ingest activities is defined within the functional model of the Open Archival Information System (OAIS; see also Chapter 1.8). The repository stores all necessary “Preservation Description Information” in the specific folders following the OAIS standard. All information the repository receives from the depositor is stored in the Submission Information Package (SIP). The Archival Information Package (AIP) is generated from the SIP; all files necessary for preserving the data (data transformation processes, checks, and final storage) are stored in the AIP. Ingest activities therefore touch all three information packages: from submission (SIP), through the files needed for preservation of the data (mainly stored in the AIP), to dissemination of the data (Dissemination Information Package, DIP). It is also possible that the DIP is handled only by the preservation and/or access team. This procedure varies from archive to archive.
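On disk, the three information packages are often kept as separate folders per data set. The following sketch shows one possible layout; the folder names (`sip`, `aip`, `dip`, `original`) and both function names are assumptions made for illustration, and each archive will have its own conventions. It creates the three package folders and starts the AIP by copying the untouched SIP files into it.

```python
import shutil
from pathlib import Path


def create_packages(root: Path, archival_number: str) -> dict:
    """Create one folder per information package for a data set."""
    base = root / archival_number
    packages = {name: base / name for name in ("sip", "aip", "dip")}
    for folder in packages.values():
        folder.mkdir(parents=True, exist_ok=True)
    return packages


def start_aip_from_sip(packages: dict) -> list:
    """Copy every SIP file into an 'original' subfolder of the AIP."""
    copied = []
    for source in sorted(packages["sip"].rglob("*")):
        if source.is_file():
            relative = source.relative_to(packages["sip"])
            target = packages["aip"] / "original" / relative
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(source, target)  # copy2 also keeps file timestamps
            copied.append(target)
    return copied
```

Transformed files, check reports, and long-term formats would then be added to the AIP folder during ingest, while the DIP folder receives only the files intended for dissemination.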